ARTICLES

NeuralScope Engineering Edition

For mobile devices, where battery life matters, we provide an engineering edition that measures how much energy a test application consumes. It is named "NeuralScope AI Benchmark Engineering Edition." This special edition focuses on helping engineers determine the power consumption of their deep learning acceleration solutions so that they can build a better runtime. Users who want to measure the power consumption of deep learning applications with this edition need an external power monitor. The edition inserts idle periods between consecutive test applications in NeuralScope AI Benchmark. As shown in Figure 1, the idle periods consume very little power, so users can isolate the power consumption of each test application. The idle periods also let users identify when a test application starts (as Figure 2 shows) and when it finishes (as Figure 3 shows).
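The idea of using low-power idle periods to separate test applications in a power trace can be sketched as follows. This is a minimal illustration, not part of NeuralScope itself. The function names `find_active_segments` and `energy_mj`, the sample format (timestamps in seconds, power in milliwatts), and the fixed idle threshold are all assumptions made for the example. A real external power monitor will have its own export format and noise characteristics.

```python
from typing import List, Tuple


def find_active_segments(power_mw: List[float],
                         idle_threshold_mw: float) -> List[Tuple[int, int]]:
    """Return (start_index, end_index) pairs of runs above the idle threshold.

    Hypothetical helper: each run is assumed to be one test application,
    and samples at or below the threshold are assumed to be idle periods.
    """
    segments = []
    start = None
    for i, p in enumerate(power_mw):
        if p > idle_threshold_mw and start is None:
            start = i                        # a test application starts
        elif p <= idle_threshold_mw and start is not None:
            segments.append((start, i - 1))  # the test application finished
            start = None
    if start is not None:                    # trace ended while still active
        segments.append((start, len(power_mw) - 1))
    return segments


def energy_mj(times_s: List[float], power_mw: List[float],
              start: int, end: int) -> float:
    """Integrate power (mW) over time (s) with the trapezoidal rule.

    The result is in millijoules, since 1 mW * 1 s = 1 mJ.
    """
    total = 0.0
    for i in range(start, end):
        dt = times_s[i + 1] - times_s[i]
        total += 0.5 * (power_mw[i] + power_mw[i + 1]) * dt
    return total


# Made-up trace: two bursts of activity separated by idle samples.
times = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
power = [50, 50, 900, 1000, 950, 50, 50, 1200, 1100, 50]

segments = find_active_segments(power, idle_threshold_mw=200)
energies = [energy_mj(times, power, s, e) for s, e in segments]
```

A fixed threshold only works when the idle power is clearly lower than the active power, which is what the inserted idle periods are meant to guarantee. For a noisy trace, smoothing the samples or requiring a minimum idle duration would be a reasonable refinement.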

You are using deep learning on your mobile!

Deep learning is actually a behind-the-scenes helper that makes our lives more convenient! It relies on multi-layer feature extraction to learn what the data contains. This can be applied to various data types, such as images, voice, and text.

Android Neural Networks API-Based Mobile Scoring App

With deep learning and AI technology growing rapidly, many deep learning frameworks have been developed to support model training. Once a model is well trained, a device must be chosen to carry out the model's heavy computation and parameter access. On power-constrained edge devices, however, this is a big challenge because of power consumption and performance issues. Recently, mobile devices have gained AI capability by adopting advanced deep learning models and powerful chips. The Android mobile operating system also provides a unified Neural Networks API (NNAPI) for running computationally intensive machine learning operations on mobile devices. This gives deep learning applications an opportunity to run on mobile devices. For Android AI application developers, it also makes it easier to dispatch execution tasks to different processors by using TensorFlow Lite to call NNAPI. To evaluate the deep learning capabilities of mobile devices, the AI System Benchmarking and Tuning Lab (人工智慧系統檢測中心), National Chiao Tung University, Taiwan, has announced a benchmark for deep learning neural networks on mobile devices, named the NeuralScope benchmark. It is built on Android NNAPI and tests the computational capacity of mobile devices.

The Trend for Mobile AI Applications

Since 2017, mobile devices have been equipped with AI capabilities by adopting advanced deep learning models and powerful chips. The Huawei Kirin 970, Apple A11 Bionic, and MediaTek Helio P60 each provide a dedicated AI processor to achieve high performance and power efficiency on mobile phones.

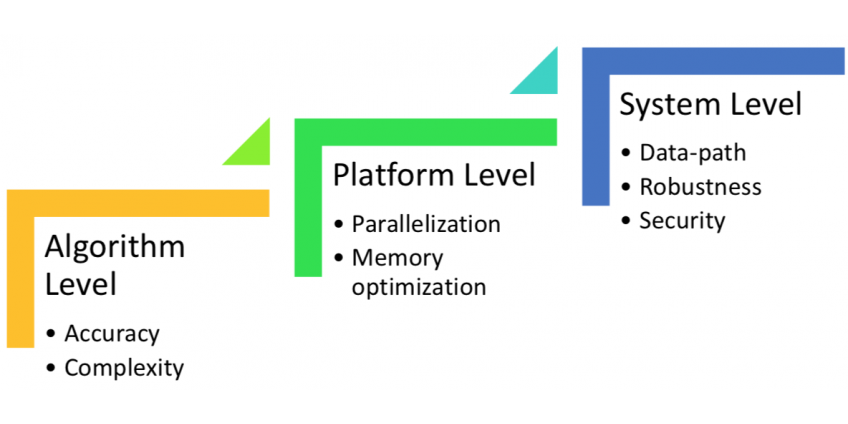

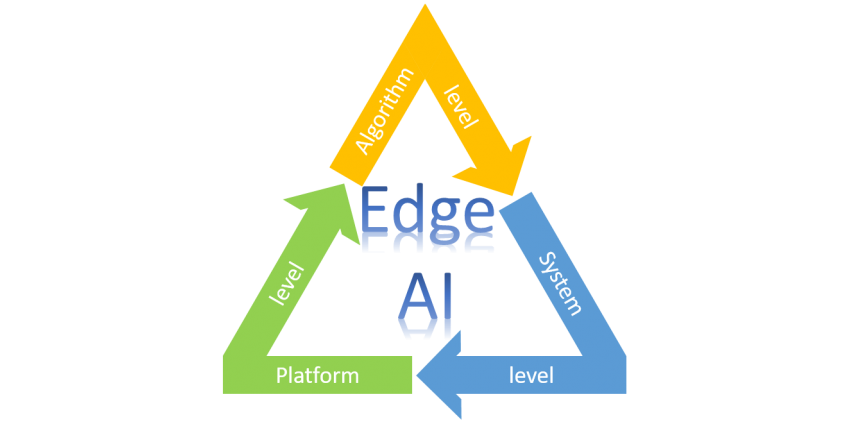

The Challenges for building AI into Edge AI apps

Mobile and embedded system apps are continuously advancing, and artificial intelligence (AI) is ready to touch our lives in a huge way. Numerous applications, such as smartphone apps, smart homes, and self-driving vehicles, are evolving with research on the energy consumption of deep learning (DL) and with growing customer demands. These demands also motivate products to process AI enhancements on the edge device rather than rely solely on cloud-connected support.